
How VMotion Works! (VMotion Explained)

You have to admit that VMware created some really cool technology back in 2003 when they released VMotion. So before we talk about how VMotion works, let’s quickly cover what it actually is! I still routinely run into people who have no idea that VMotion exists, and when they find out what it does they are always amazed that such a feat is even possible, much less practical! Since my project on building two 1U servers for ESXi, I’ve gotten a lot of questions along the lines of “How does VMotion work?”

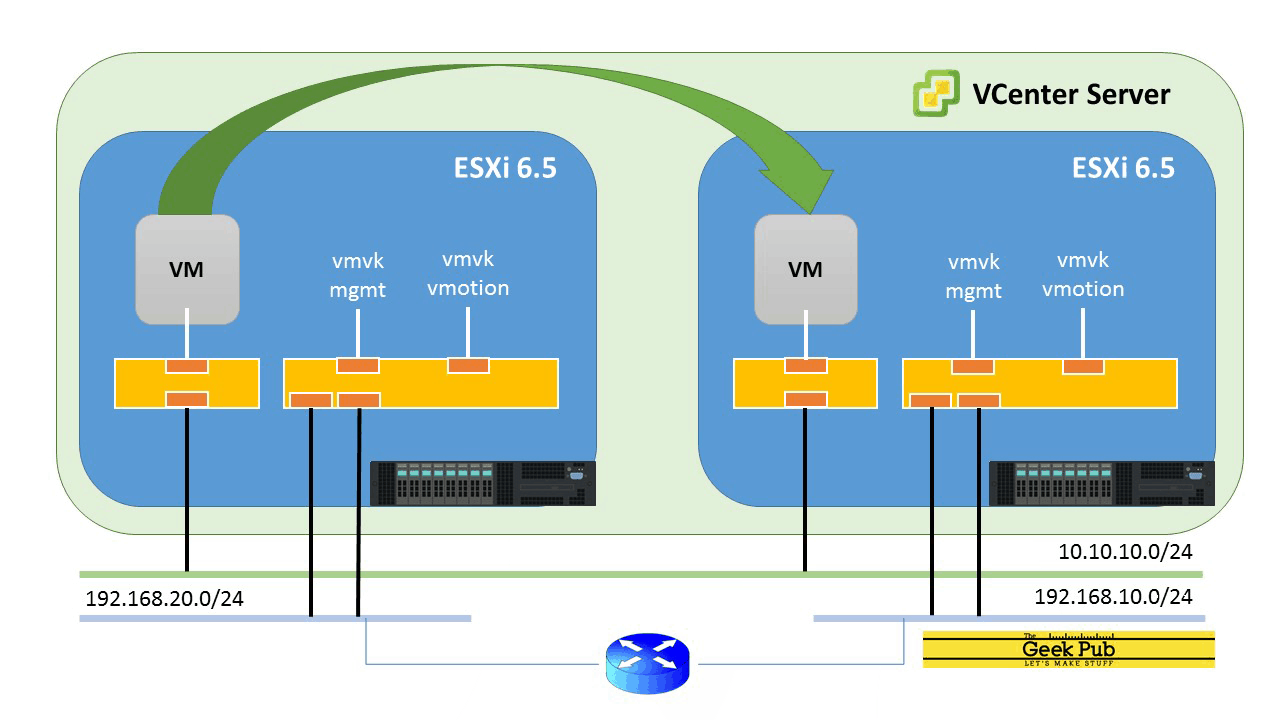

VMotion is VMware’s technology that enables a virtual computer to be moved from one physical host server to another while it is running, with no interruption in service. This technology is sometimes called “live migration”. In fact, Microsoft has a similar technology in the Hyper-V stack known as, you guessed it: Hyper-V Live Migration.

There are some amazing benefits to VMotion and Live Migration. Since a server can be moved to completely different hardware while it is running, the underlying hardware can be swapped out or taken down for maintenance without downtime, and without the end-users or other applications being affected or even knowing it has happened. This has really changed how we handle system maintenance and outages in modern compute environments. Before this technology became commonplace in data centers, outage windows had to be negotiated with customers and servers had to be taken off-line for hours to replace or upgrade hardware. In the VMotion world, new hardware can be built and the virtual server moved without any downtime. So how does VMotion work? Let’s find out!

How VMotion Works

So let’s move on to how VMotion actually works. When explaining how VMotion works, it’s not uncommon for me to say to myself that “…it is quite amazing that, as complicated as it is (and yet so elegantly simple), it actually works.” There were definitely some big brains working at VMware, and most of it can be attributed to Mike Nelson, who led the VMotion project.

The Storage Subsystem Plays the First Key Role

Unlike your standard desktop or standard physical server, the hard disk of a VMware server is not generally located on a physical disk inside the server. Instead, the server’s hard drives are also virtualized and sit either on a Storage Area Network (SAN) reached via Fibre Channel or iSCSI, or on a Network File System (NFS) volume mounted from Network Attached Storage (NAS). While there are other technologies that VMware can use (vSAN, hyper-converged computing, or even storage migration), we are going to focus on this most common configuration.

With the server’s disk virtualized and encapsulated on the network, VMware stores it as a virtual disk file on a Virtual Machine File System (VMFS) volume, which can be shared with multiple physical servers running VMware’s ESXi bare-metal hypervisor. These physical servers all work together to share read-write access to the storage that holds this virtual hard drive. That alone is a pretty amazing feat when you think about the logistics of it!

Before the VMotion begins, a “shadow copy” of the VMware guest’s configuration is created on the receiving ESXi host, but it is not yet visible in vSphere. This shadow copy is just a shell which will receive the memory contents next.

The Memory Manager Plays the Second Key Role

The next big piece of understanding how VMotion works is related to the memory management within VMware. Since the virtualized computer’s memory is also mapped and virtualized, it allows VMware’s VMotion to do something amazing. VMotion takes a snapshot of the system RAM, a copy if you will, and starts the rapid transfer of this memory over the Ethernet network to the chosen host computer. This includes the states of other system buffers, and even the video RAM. VMware engineers refer to this original snapshot as a “precopy”.

While this snapshot is being transferred, VMotion maintains a change log buffer of any changes that have happened since the original snapshot was made. This is where faster network speeds help: the less time each transfer takes, the fewer changes pile up in the meantime, allowing a faster VMotion to occur!

VMotion will continue to make and copy these change buffers to the receiving host (and integrate them into memory there) until the next set of change buffers is small enough that it can be transferred over the network in less than roughly 500 ms. When that occurs, VMotion halts the virtual CPU(s) of the guest virtual machine, copies the last buffer, and integrates it into the guest’s virtual RAM. VMotion discontinues disk access on the sending host, and starts it on the receiving host. Lastly, VMotion starts the virtual CPU(s) on the receiving machine.
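
To make the loop above concrete, here is a rough back-of-the-napkin simulation in Python. It is only a sketch of the idea described in this post, not VMware’s actual implementation: the function name, the constant “dirty rate”, and the round limit are all assumptions I made up for illustration. Only the roughly 500 ms switchover target comes from the description above.

```python
def simulate_precopy(memory_mb, dirty_mb_s, link_mb_s,
                     switchover_s=0.5, max_rounds=30):
    """Simulate iterative pre-copy (hypothetical model, not VMware code).

    memory_mb    -- size of the guest's RAM
    dirty_mb_s   -- assumed constant rate at which the guest changes memory
    link_mb_s    -- usable network throughput between the two hosts
    switchover_s -- largest final copy we tolerate while the vCPUs are halted

    Returns (rounds, downtime_s), where rounds is a list of
    (mb_sent, seconds) tuples for each pass made while the guest kept running.
    """
    rounds = []
    to_send = memory_mb  # first pass: the full memory snapshot ("precopy")
    for _ in range(max_rounds):
        transfer_s = to_send / link_mb_s
        if transfer_s <= switchover_s:
            # Small enough: halt the vCPUs, copy this last buffer, resume remotely.
            return rounds, transfer_s
        rounds.append((to_send, transfer_s))
        # Memory changed while that pass was on the wire becomes the next buffer.
        to_send = min(memory_mb, dirty_mb_s * transfer_s)
    raise RuntimeError("memory is changing too fast to reach the switchover target")


if __name__ == "__main__":
    rounds, downtime = simulate_precopy(memory_mb=8192, dirty_mb_s=100, link_mb_s=1000)
    for number, (mb, seconds) in enumerate(rounds):
        print(f"pass {number}: sent {mb:.1f} MB in {seconds:.3f} s")
    print(f"final copy with vCPUs halted: {downtime * 1000:.1f} ms")
```

With these made-up numbers, each pass is about ten times smaller than the one before it, because the link is ten times faster than the guest can change memory. That ratio is what makes the whole scheme converge.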

The Virtual Switch Network Plays the Final Role

After the virtual CPU(s) are started, VMotion has one final task at hand. VMware ESXi runs and controls one or more virtual switches on the local network. This is what the virtual network adapter on the guest OS connects to. And just like any other network, physical or virtual, all of the switches maintain a map of network MAC addresses and what port they are connected to. Since the machine is likely no longer connected to the same physical switch port, VMotion instructs the receiving ESXi host’s network subsystem to send out a Reverse ARP (RARP). This causes all of the switches, both physical and virtual, to update their mappings so that network traffic will arrive at the new host, rather than the old.
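
If you are curious what such a frame looks like on the wire, here is a small Python sketch that builds a broadcast RARP frame and shows how a switch would “learn” from it. The EtherType (0x8035) and the RARP opcode (3, “request reverse”) come from the RARP standard; everything else, including the function names, the zeroed IP fields, the example MAC address, and the port names, is my own assumption for illustration and not a capture of what ESXi actually sends.

```python
import struct

BROADCAST_MAC = b"\xff" * 6
ETHERTYPE_RARP = 0x8035       # EtherType assigned to Reverse ARP
RARP_REQUEST_REVERSE = 3      # RARP opcode "request reverse"


def mac_to_bytes(mac):
    """Convert 'aa:bb:cc:dd:ee:ff' into 6 raw bytes."""
    return bytes.fromhex(mac.replace(":", ""))


def build_rarp_frame(vm_mac):
    """Build a broadcast RARP frame whose source is the guest's MAC (a sketch)."""
    mac = mac_to_bytes(vm_mac)
    ethernet_header = BROADCAST_MAC + mac + struct.pack("!H", ETHERTYPE_RARP)
    # Hardware type 1 (Ethernet), protocol 0x0800 (IPv4), address lengths 6 and 4.
    rarp_header = struct.pack("!HHBBH", 1, 0x0800, 6, 4, RARP_REQUEST_REVERSE)
    # Sender and target hardware address are both the guest's MAC; the IP
    # fields are left as zeros here (an assumption made for this example).
    rarp_body = mac + b"\x00" * 4 + mac + b"\x00" * 4
    return ethernet_header + rarp_header + rarp_body


def switch_learn(mac_table, frame, ingress_port):
    """Toy switch: remember which port the frame's source MAC arrived on."""
    source_mac = frame[6:12]
    mac_table[source_mac] = ingress_port


if __name__ == "__main__":
    vm_mac = "00:50:56:aa:bb:cc"  # hypothetical guest MAC
    table = {mac_to_bytes(vm_mac): "port-to-old-host"}
    switch_learn(table, build_rarp_frame(vm_mac), "port-to-new-host")
    print(table[mac_to_bytes(vm_mac)])
```

The key point is that the switch does not need to understand RARP at all. It only looks at the source MAC address in the Ethernet header, and because the frame is a broadcast, every switch in the segment sees it and updates its table.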

Now if you’re like me, you are shaking your head in awe. It’s some pretty amazing tech!

Some Interesting VMotion Notes

If this gets you thinking there might be some caveats to VMotion, you are right. Here are a few things to chew on:

- The busier the guest operating system is, the more memory pages are likely to change. This will make a VMotion take longer. Although I’ve never seen it, it would theoretically be possible for VMotion to fail if memory on the guest were changing at a rate faster than it could be transferred over the network.

- VMotion will check the remote system to make sure there is enough RAM and CPU before it begins the process. If VMotion can’t be 100% certain that it will succeed it won’t begin. However, if for some reason a VMotion does fail in mid-transfer it will abort with no impact to the original virtual machine’s uptime.
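
The first caveat can be put into rough numbers. If the guest changes memory at a steady rate and the network moves data at a steady rate, each pass shrinks by the ratio of the two, so the number of passes can be estimated with a logarithm. The Python sketch below does that. Treat it as an assumption-laden estimate: the function name and the constant-rate model are mine, and real workloads are far burstier than this.

```python
import math


def estimate_precopy_passes(memory_mb, dirty_mb_s, link_mb_s, switchover_s=0.5):
    """Estimate how many copy passes run before the final switchover.

    Returns None when the guest changes memory at least as fast as the
    network can carry it, since the remaining data would never shrink.
    (Hypothetical constant-rate model, not a VMware formula.)
    """
    if memory_mb / link_mb_s <= switchover_s:
        return 0  # all of RAM already fits inside the switchover window
    if dirty_mb_s >= link_mb_s:
        return None  # never converges under this model
    if dirty_mb_s == 0:
        return 1  # one full pass and nothing is left to copy
    shrink_per_pass = dirty_mb_s / link_mb_s
    # Fraction of RAM that may still be outstanding when the vCPUs are halted.
    allowed_fraction = switchover_s * link_mb_s / memory_mb
    return math.ceil(math.log(allowed_fraction) / math.log(shrink_per_pass))


if __name__ == "__main__":
    print(estimate_precopy_passes(8192, 100, 1000))
    print(estimate_precopy_passes(8192, 1200, 1000))
```

The second call is the theoretical failure case from the first bullet: the guest is changing 1200 MB every second but the link only carries 1000 MB every second, so the estimate comes back as `None`.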

So now you know how VMotion works! And yes, those VMware engineers are definitely some smart people!

Thanks for the info Mike! You’re seriously a genius. This is something I have wanted to know for a while. I’d call the engineers at Vmware “elegant.”

This is the first explanation of VMotion I can actually understand. Thanks for writing this Mike!

It’s amazing that something like this actually works at all. Super cool! Some really smart people figured this out. Way smarter than me!

Thanks, this is an awesome explanation!

Still relevant information in 2022. Great article and awesome break down!

It’s 2025 and I find this fascinating AF.